How do we turn AI from an educational shortcut into a scaffold that helps students learn?

Rosie Dutt, Teaching Assistant Professor & Director of Undergraduate Neuroscience Career Development at the University of North Carolina, explores this.

Dr. Dutt teaches interdisciplinary computational neuroscience courses, integrating engineering and data science concepts. Her background in communications, consulting, and entrepreneurship helps foster student success and alumni engagement through career development initiatives, connecting students with professional opportunities and strengthening alumni networks.

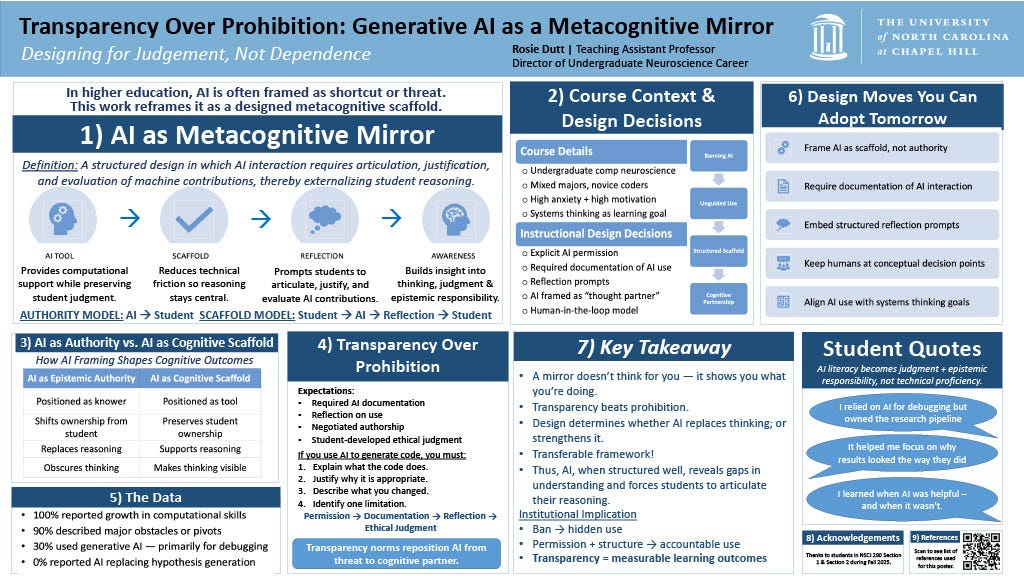

In higher education, AI is often framed as a shortcut or even a threat to learning. Yet it can be more productively understood not as an authority that replaces student thinking, but as a metacognitive scaffold: a designed tool that supports students in reflecting on their own reasoning.

This approach asks students to actively articulate, justify, and evaluate how they use AI’s contributions, shifting AI’s role from doing the thinking for them to helping them think more deeply. The AI tool provides computational support while preserving student judgment, reducing technical friction and prompting reflection.

I implemented this approach in an undergraduate computational neuroscience course with students from a mix of majors, many of whom were new to coding. Students were explicitly asked to document their AI use and respond to structured reflection prompts. This transparency repositioned AI from a prohibited shortcut to a cognitive partner that strengthens learning.

The data showed that 100% of students reported growth in computational skills, though only 30% used generative AI, mostly for debugging. More importantly, students gained insight into when AI was helpful and when it wasn’t, developing judgment and epistemic responsibility rather than just technical proficiency.

This shift toward transparency (where students have permission but must document and reflect on AI use) creates ethical accountability and makes learning outcomes measurable, moving beyond the default impulse to ban AI outright.

The key takeaway is that transparency and structured design around AI use beat prohibition. AI doesn’t replace thinking; it shows students what they are actually doing, helping them articulate and strengthen their reasoning.

Ultimately, this framework offers a transferable way to integrate AI in education responsibly, supporting students as active thinkers rather than passive users.